The Challenge

What MetekuAI Was Facing

MetekuAI operated AI decision pipelines that required human review for low-confidence outputs before results were committed to downstream systems. The review workflow, the AI pipeline, and the downstream systems it fed had no integration between them. When an AI output was flagged for human review, the case had to be manually exported, reviewed in a disconnected tool, and the decision manually re-entered into the downstream system. The process added 6 to 24 hours of latency to flagged decisions and introduced transcription errors during manual re-entry.

The Solution

What We Built

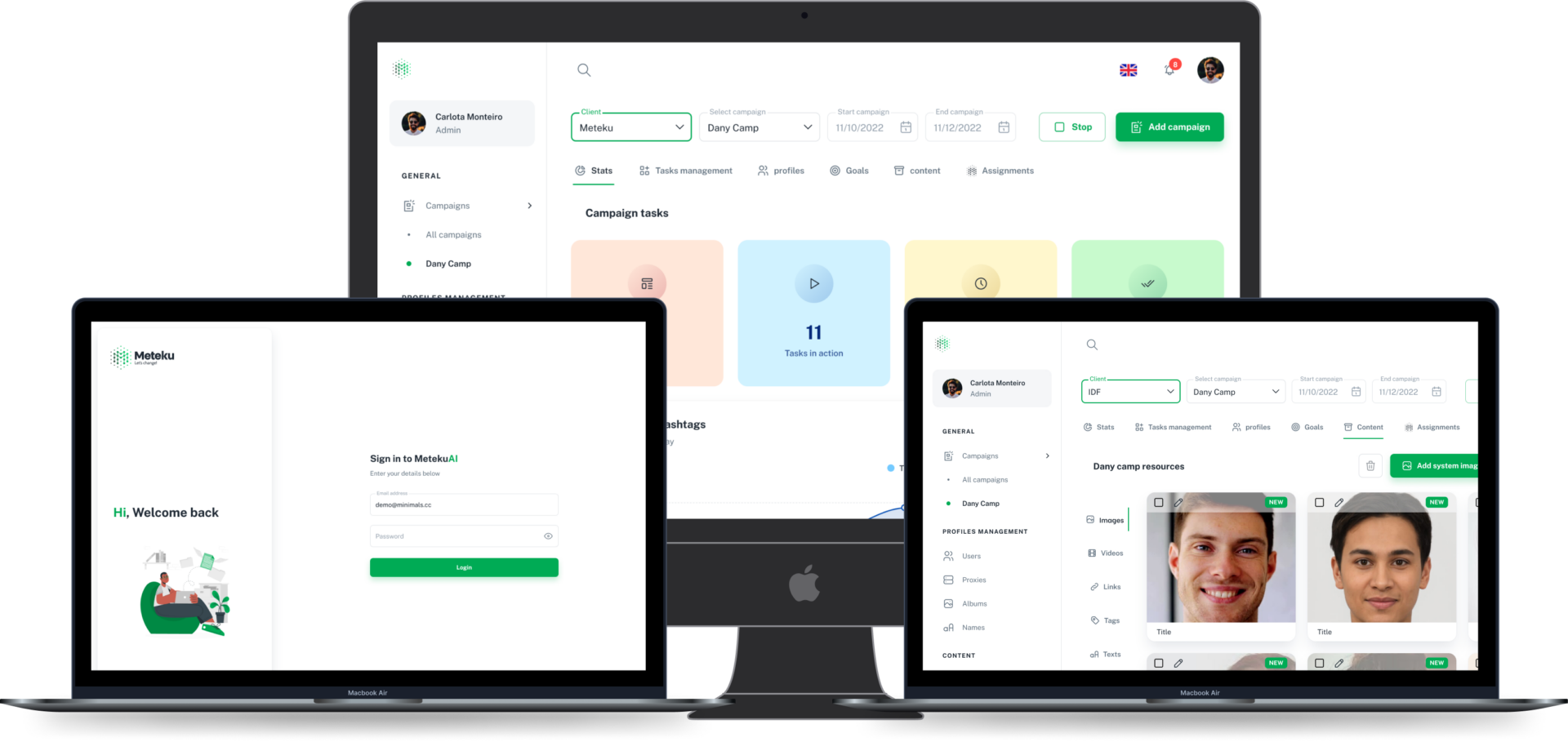

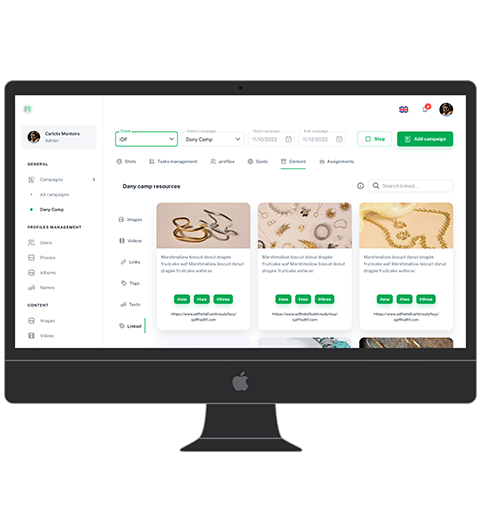

We designed an integration middleware that sat between the AI pipeline and downstream systems, intercepting low-confidence outputs and routing them to a structured human review queue. The review interface pulled full context from the AI pipeline (input data, model reasoning, and confidence scores) and presented it to reviewers alongside the proposed output. Reviewer decisions were written back through the middleware to both the downstream system and the AI pipeline, where they served as training feedback signals. The middleware maintained a complete audit trail linking every downstream record to either an autonomous AI decision or a human-reviewed one.
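
The routing, write-back, and audit behaviour described above can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the middleware that was actually built: the 0.8 threshold, the class names (`AIOutput`, `AuditEntry`, `Middleware`), and the in-memory dictionaries and lists standing in for the downstream system, review queue, and feedback channel are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional

# Assumed cutoff; the case study does not state the real threshold.
CONFIDENCE_THRESHOLD = 0.8


@dataclass
class AIOutput:
    """One pipeline result plus the context a reviewer would need."""
    case_id: str
    input_data: dict
    reasoning: str
    confidence: float
    proposed: str


@dataclass
class AuditEntry:
    """Links a downstream record to the decision that produced it."""
    case_id: str
    decision: str
    source: str  # "ai" for autonomous, "human" for reviewed
    reviewer: Optional[str] = None


@dataclass
class Middleware:
    downstream: dict = field(default_factory=dict)    # stand-in for the downstream system
    review_queue: list = field(default_factory=list)  # stand-in for the review queue
    feedback: list = field(default_factory=list)      # stand-in for training feedback
    audit_log: list = field(default_factory=list)

    def handle(self, output: AIOutput) -> str:
        """Commit confident outputs directly; queue the rest for review."""
        if output.confidence >= CONFIDENCE_THRESHOLD:
            self._commit(output.case_id, output.proposed, source="ai")
            return "committed"
        self.review_queue.append(output)
        return "queued"

    def submit_review(self, case_id: str, decision: str, reviewer: str) -> None:
        """Write a reviewer's decision downstream and record it as feedback."""
        output = next(o for o in self.review_queue if o.case_id == case_id)
        self.review_queue.remove(output)
        self._commit(case_id, decision, source="human", reviewer=reviewer)
        self.feedback.append(
            {"case_id": case_id, "proposed": output.proposed, "final": decision}
        )

    def _commit(self, case_id: str, decision: str, source: str,
                reviewer: Optional[str] = None) -> None:
        # Every downstream write gets a matching audit entry.
        self.downstream[case_id] = decision
        self.audit_log.append(AuditEntry(case_id, decision, source, reviewer))


mw = Middleware()
mw.handle(AIOutput("c1", {"amount": 120}, "matches known pattern", 0.95, "approve"))
mw.handle(AIOutput("c2", {"amount": 9000}, "unusual amount", 0.55, "approve"))
mw.submit_review("c2", "reject", reviewer="reviewer-1")
```

In this sketch, a flagged case never reaches the downstream store until a reviewer decides it, and the reviewer's decision is written once rather than re-entered by hand, which is the step where the original process introduced transcription errors.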

Results